running touhou ufo (x86_32) on an orange pi 5 (arm_aarch64) in kiosk mode

preface / intro

hello friends hello friends and hello friends! i hope you've been doing well, it's been like a month since i spoke at cyphercon about dealing drugs, did you guys enjoy that? i hope so!

regardless, in the past i've usually thought of blog posts before i actually started working on them - and that's annoying, and a tad forced. i think it'll be a lot more fun just talking about some of the things that're going on in my computer life.

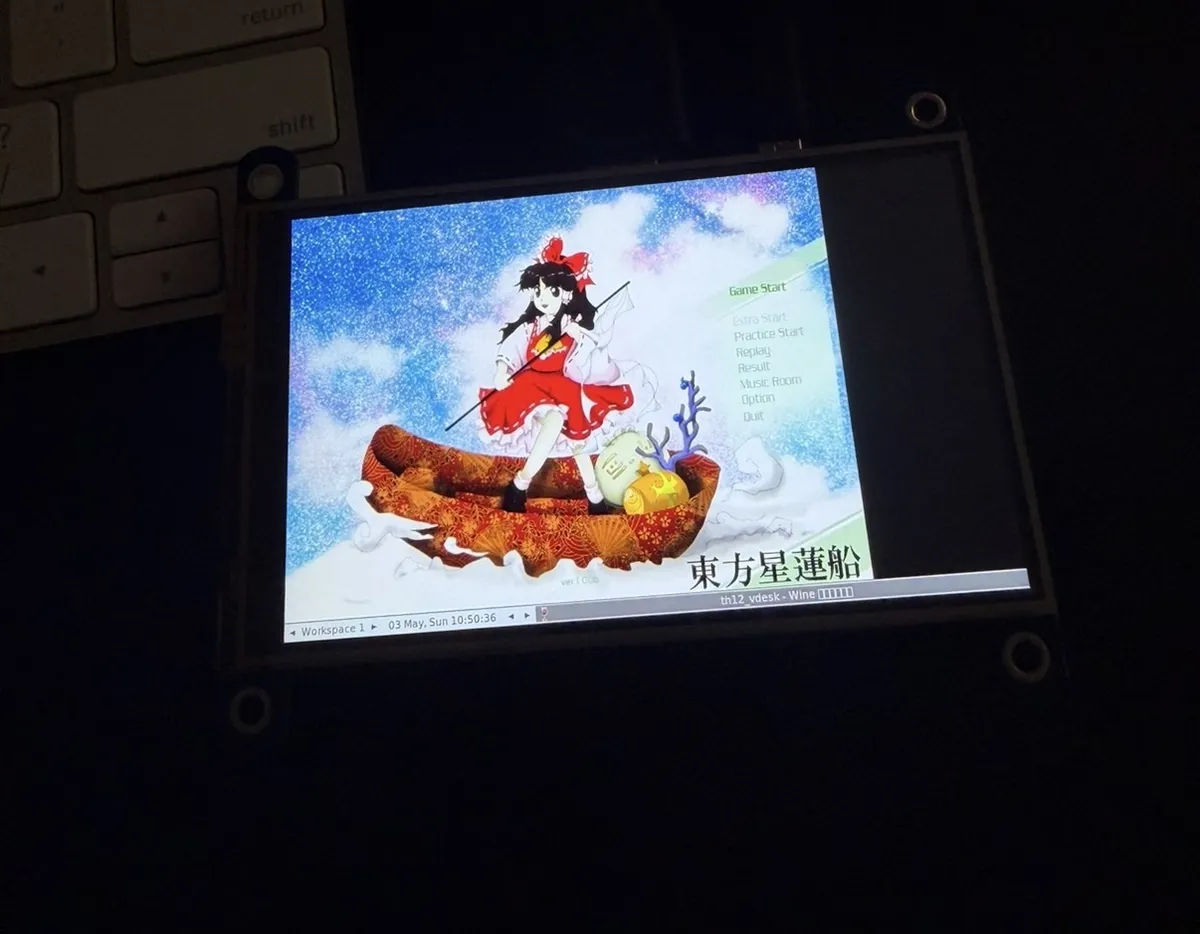

in preparation for a (secret thing) i (hopefully) have coming up in 2027, i recently got touhou 12: undefined fantastic object running on an orange pi 5 (referred to as opi / opi5 for the rest of this post) that i had just picked up.

tbh, it has been a while since i've actually messed around with operating systems and architecture translation on a level like this (sbcs are not my strong suit by any means) - so this was an overall nice experience to have in the end. also thank you claude. you are actually the goat (and my greatest enemy). this would not have been possible without you. also thanks to the maintainers of thcrap: the touhou modding community is also goated, and made some of the later steps a lot faster.

some more introductions

okay so i can't say exactly why im doing this which sucks a lot of the life outta this blog, but i'll start with where we're at:

- touhou 12 is a bullet hell / shoot 'em up that released in 2009 for windows. since it was 2009, the executable only shipped as 32 bit (x86)

- while that's fine for 2009, it's 2026 and we'd like to run this game on an orange pi, an sbc that runs arm (aarch64)

- outside of the architectures being different, we also run into the problem of the utilization of direct3d 9, we need to render the graphics somehow

- but again, we're running this on an opi. is the user supposed to plug in a kbm? manually start it up? the base os doesn't have a gui

- the purpose of this machine is to play touhou, i don't want to click through buttons every time i start up? how do we solve that?

- so this is a touhou machine, but we're running a game built for windows desktop. how do we optimize it to be playable? modification? re? injection? ???

wow. that's a lot of questions to answer! but before any of that, we should answer: can you even render d3d9 on an arm chip?

p.s. before we really start diving in, the full code is listed on my github here: touhou-kiosk, but i recommend reading the blog before anything.

bootstrapping

although i wish we could just run wine n call it a day, we can't. before anything else, let's use some diagnostic harnesses to validate wine's capability to run d3d9 before i dig myself into a deep hole im too stubborn to leave.

starting w/ number 1: d3d9_init.c.

straight-forward, get a handle to the direct3d runtime, enumerate the adapter, check its display mode + capabilities, then create the rendering device.

i won't step through every bit of the code here, but i'll show the adapter query that identifies the gpu:

UINT n = IDirect3D9_GetAdapterCount(d3d);

LOGF("[INFO] adapter count = %u\n", n);

D3DADAPTER_IDENTIFIER9 id = {0};

HRESULT hr = IDirect3D9_GetAdapterIdentifier(d3d, D3DADAPTER_DEFAULT, 0, &id);

if (FAILED(hr)) {

LOGF("%s GetAdapterIdentifier hr=0x%08lx\n", FAIL, hr);

} else {

LOGF("%s adapter Driver=\"%s\" Description=\"%s\" VID=0x%04lx DID=0x%04lx\n",

PASS, id.Driver, id.Description, id.VendorId, id.DeviceId);

}

in D3DADAPTER_IDENTIFIER9 id, we zero-init a ~1100 byte output struct on the stack, then we call the idirect3d9::getadapteridentifier method with four arguments: d3d: the direct3d 9 instance, d3dadapter_default: the primary/default gpu, 0: flags, passing 0 just skips any, then &id: the out-param, with wined3d filling the rest of the struct in its place.
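as a quick sanity check on that "~1100 byte" figure, here's a small python sketch that adds up the field sizes. it assumes the 4-byte-packed layout a 32-bit build uses; the field names and sizes follow the public d3d9 header, but the format string is only an illustration, not something from the repo:

```python
import struct

# D3DADAPTER_IDENTIFIER9 as laid out for a 32-bit (4-byte packed) build.
# "<" means little-endian with no implicit padding between fields.
FIELDS = [
    ("Driver",           "512s"),  # char[512]
    ("Description",      "512s"),  # char[512]
    ("DeviceName",       "32s"),   # char[32]
    ("DriverVersion",    "q"),     # LARGE_INTEGER, 8 bytes
    ("VendorId",         "I"),
    ("DeviceId",         "I"),
    ("SubSysId",         "I"),
    ("Revision",         "I"),
    ("DeviceIdentifier", "16s"),   # GUID
    ("WHQLLevel",        "I"),
]

layout = "<" + "".join(code for _, code in FIELDS)
total_size = struct.calcsize(layout)
print(total_size)  # 1100
```

two 512-byte strings make up almost all of it, which is why zero-initialising the struct before the call is cheap insurance against reading garbage if the call fails.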

if the init program passes without issue, d3d9 device creation succeeded, which tells us the path is healthy end-to-end through the entire stack.

then we have number 2, d3d9_render.c.

this is essentially just a test that will create a render loop and report the frames per second (fps) that the d3d9 emulation is currently running under. it's not necessary in the overall project but it helps answer the question of "is this possible" without trying to mess around w/ a hopeless binary.

for (int f = 0; f < frames; f++) {

/* Pump messages so the window stays responsive to the WM. */

MSG msg;

while (PeekMessageA(&msg, NULL, 0, 0, PM_REMOVE)) {

if (msg.message == WM_QUIT) { f = frames; break; }

TranslateMessage(&msg);

DispatchMessageA(&msg);

}

DWORD bg = D3DCOLOR_XRGB((f * 3) & 0xff, (f * 5) & 0xff, (f * 7) & 0xff);

IDirect3DDevice9_Clear(dev, 0, NULL, D3DCLEAR_TARGET, bg, 1.0f, 0);

if (SUCCEEDED(IDirect3DDevice9_BeginScene(dev))) {

VTX v[6];

float cx = 320.0f + 100.0f * (float)((f % 60) - 30) / 30.0f;

float cy = 240.0f;

float s = 50.0f;

DWORD c = D3DCOLOR_XRGB(0xff, 0x40, 0x40);

v[0].x = cx - s; v[0].y = cy - s; v[0].z = 0; v[0].rhw = 1; v[0].color = c;

< SNIP >

v[5].x = cx - s; v[5].y = cy + s; v[5].z = 0; v[5].rhw = 1; v[5].color = c;

hr = IDirect3DDevice9_DrawPrimitiveUP(dev, D3DPT_TRIANGLELIST, 2, v, sizeof(VTX));

if (FAILED(hr)) draw_failures++;

IDirect3DDevice9_EndScene(dev);

}

hr = IDirect3DDevice9_Present(dev, NULL, NULL, NULL, NULL);

if (FAILED(hr)) present_failures++;

else actually_drawn++;

}

this snippet accomplishes four things:

- the win32 message pump: drains the os event queue so no windows are marked as "hung"

- per-frame state change: ask d3d9 to fill the back buffer with a color that changes every frame

- the draw: allows d3d9 to draw a moving red square through the six vertices that're passed along

- the flip: once the buffer is finished, push to the screen, crossing the entire translation stack

the goal of these two programs was to test the potential to emulate touhou (th12) in an arm environment.
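if you want to see what the loop is actually asking d3d9 to draw without running it, the per-frame math is easy to mirror. a minimal python sketch, assuming the same constants as the c snippet above (the helper names are made up for illustration):

```python
def d3dcolor_xrgb(r, g, b):
    """Mirror of the D3DCOLOR_XRGB macro: opaque alpha, then r, g, b bytes."""
    return 0xFF000000 | ((r & 0xFF) << 16) | ((g & 0xFF) << 8) | (b & 0xFF)


def frame_state(f):
    """Return (background colour, square centre x) for frame number f."""
    bg = d3dcolor_xrgb(f * 3, f * 5, f * 7)
    cx = 320.0 + 100.0 * ((f % 60) - 30) / 30.0
    return bg, cx


for f in (0, 30, 59):
    bg, cx = frame_state(f)
    print(f"frame {f:2d}: bg=0x{bg:08x} cx={cx:.1f}")
```

the square sweeps from x=220 up towards x=420 over 60 frames and then snaps back, while the background colour drifts every frame, so a stalled or dropped present is easy to spot by eye.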

the cpu side: hangover wine + box64

d3d9 init + render came back good on the opi, we can render an arbitrary d3d9 frame. so let's get started getting the actual game running.

the opi is built around the rk3588s soc (four cortex-a76 + four cortex-a55 cores, mali-g610 gpu), so we have the capability to run a game that can handle multiple objects moving across the screen in 'independent' directions. but how do we approach the translation of x86 → arm for touhou?

to start, we need a middle layer that does the instruction translation, i.e. a dynarec. it'll read x86 instructions at runtime and will emit the aarch64 equivalent instruction, caching hot translations as it goes.
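the "translate once, cache the hot path" idea is easier to see in a toy. this is a deliberately tiny python model of a code cache; it is not how box64 (or any real dynarec) is structured internally, and the "translation" is just string tagging:

```python
class ToyDynarec:
    """Toy model of a dynarec's code cache (not a real dynarec's data structures)."""

    def __init__(self):
        self.code_cache = {}   # guest address -> "translated" block
        self.translations = 0  # how many times we paid the translation cost

    def translate(self, guest_addr, guest_block):
        # Stand-in for real x86 -> aarch64 emission: just tag each instruction.
        self.translations += 1
        return tuple(f"a64<{insn}>" for insn in guest_block)

    def run(self, guest_addr, guest_block):
        block = self.code_cache.get(guest_addr)
        if block is None:  # cold: translate once, then cache
            block = self.translate(guest_addr, guest_block)
            self.code_cache[guest_addr] = block
        return block       # hot: reuse the cached translation


jit = ToyDynarec()
loop_body = ("mov eax, [esi]", "add eax, 1", "jnz 0x401000")
for _ in range(1000):
    jit.run(0x401000, loop_body)
print(jit.translations)  # 1
```

a game loop is the ideal workload for this: the same few blocks run every frame, so almost every execution after the first is a cache hit.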

wine on aarch64 does not ship a dynarec natively, but it does have the support. modern wine's new wow64 mode inherits microsoft's windows-on-arm architecture, where x86 translation is delegated to a named dll at a set path that wine expects it at. if the correct dll isn't in that slot, wine logs the failed dll load and aborts:

warn:file:NtCreateFile L"C:\windows\system32\wowbox64.dll" not found

warn:module:load_dll Failed to load module wowbox64.dll; status=c0000135

after this: wine exits with code 53. th12.exe never executes a single instruction. c0000135 is status_dll_not_found. wine has the slot, but it's empty.
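if you hit a different status code in that log line, the bit layout of an ntstatus value tells you how bad it is before you even look it up. a small python sketch (the lookup table only contains the one code from this post; the function name is made up):

```python
# Severity lives in the top two bits of a 32-bit NTSTATUS value.
SEVERITY = {0: "success", 1: "informational", 2: "warning", 3: "error"}

# Tiny lookup table; only the code from the log above is filled in.
KNOWN = {0xC0000135: "STATUS_DLL_NOT_FOUND"}


def describe_ntstatus(status):
    severity = SEVERITY[(status >> 30) & 0x3]
    facility = (status >> 16) & 0xFFF
    code = status & 0xFFFF
    name = KNOWN.get(status, "unknown")
    return f"{name} (severity={severity}, facility=0x{facility:x}, code=0x{code:x})"


print(describe_ntstatus(0xC0000135))
```

anything starting with 0xC is an error-severity status, which is why wine gives up instead of limping along.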

so, something needs to fill those slots:

- hangover wine 11.4

- mainline wine works as a host on aarch64, but its empty cpu-translator slot is fatal for x86 executables. but we have hangover to solve this. hangover is a wine fork that ships two dynarec implementations pre-installed in the prefix, plus a handful of patches that smooth over the integration. drop-in for our purpose here.

- box64

- box64 is the dynarec that's actually doing the work. every x86 instruction that th12 executes will be recompiled into aarch64 by box64 as it executes. hangover ships this as wowbox64.dll, prepopulating the slot at */usr/lib/wine/aarch64-windows/, so when the loader does its translator probe at startup, it'll find that dll on the first hit. box64 operates as a jit: the first time a set of x86 instructions runs, box64 will translate it into native arm code and store the result in its own code cache. subsequent executions at the same x86 address will hit the code cache directly, so we can get close to native performance with these older games (this is also why first boot/cold start takes a lot longer).

- wined3d

- this is the wine component that translates direct3d9 calls into opengl. this ships directly with wine, so no extra setup was needed

- mesa + panfrost

- mesa is the open-source userspace graphics stack, and panfrost is its driver for mali gpus. the linux kernel ships the panfrost drm piece, mesa ships the userspace gallium driver that actually compiles the shaders. wined3d's opengl calls land here and are then processed by the board's gpu (mali-g610).

displaying & running the game

after getting the game to actually launch and pop the title screen, i was able to step through the menus, and eventually crash at the stage 1 load. debugging revealed a death call of d3derr_invalidcall, i.e. the application is calling for something that doesn't exist.

for a while i was confused about what was actually missing, but it turned out to just be a display mode (whoops). the panel i was using to display touhou is a vertical 480x800 hdmi screen, and when wined3d enumerated display modes from x11/drm it got back a single-entry mode list with no 640x480 in it. so when touhou asked for 640x480 fullscreen at device-creation time, wined3d returned D3DERR_INVALIDCALL ("mode unsupported").

the fix was simply:

wine explorer /desktop=th12,640x480 /path/to/th12.exe

the /desktop= flag creates a wine virtual desktop window of the given size, and the 640x480 window that the application requested is displayed inside it. wined3d sees its own virtual desktop as the only display, stops querying the host x server for available modes, and reports "yes, 640x480 is supported." th12 stops getting refused at device creation, and the game runs believing it's in a 640x480 fullscreen display.

squeezing 640x480 into a 480x800 vertical panel

now the game runs in a 640x480 wine window, but the panel i'm running is a 480x800 vertical. on top of that, touhou's playfield isn't 640x480 either (it's about 384x448), with the rest being general info/hud. since this is a kiosk and we're working with limited space, i wanted to focus on the gameplay only, then add in my own overlay later.

xrandr can help us out here. the tool's --transform flag takes a 3x3 matrix that relates output panel coordinates to source framebuffer coordinates. after some trial-and-error, i landed on:

xrandr --output HDMI-1 --rotate left

xrandr --fb 640x480 --output HDMI-1 --rotate normal \

--transform 0.8,0,32,0,0.56,16,0,0,1

the order matters due to how wine reads data from its primary display and playing nice with how xrandr is rearranging the buffer reads, but i won't go too deep here.

the matrix 0.8,0,32,0,0.56,16,0,0,1 is in row-major form [a b c; d e f; g h i] and, per xrandr's convention, maps output panel coords back into source framebuffer coords (the inverse direction of how most people first read "scale" — for each panel pixel, the matrix tells the compositor where to sample in the fb). reading it that way:

- horizontal: each output pixel samples from 0.8 * panel_x + 32 in fb space (so the visible region is the fb x-range [32, 32 + 0.8*panel_width], cropping the left UI column)

- vertical: each output pixel samples from 0.56 * panel_y + 16 in fb space (cropping top + bottom margins so the playfield fills the panel)

- the bottom row is the homogeneous coordinate, leave it alone

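result: the 384x448 playfield fills the 480x800 panel with no black bars and the score panel cropped out. the scale isn't uniform (1/0.8 = 1.25x horizontally, 1/0.56 ≈ 1.79x vertically), so the picture comes out stretched taller than its original aspect.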
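to sanity-check the matrix, here's a small python sketch that pushes the panel corners through it. the matrix values and the 480x800 panel size come from above; the function name is only for illustration:

```python
# Row-major 3x3 transform, same numbers as the xrandr command above.
TRANSFORM = (
    (0.8, 0.0, 32.0),
    (0.0, 0.56, 16.0),
    (0.0, 0.0, 1.0),
)


def panel_to_fb(panel_x, panel_y, m=TRANSFORM):
    """Map a panel pixel to the framebuffer point it samples from."""
    x = m[0][0] * panel_x + m[0][1] * panel_y + m[0][2]
    y = m[1][0] * panel_x + m[1][1] * panel_y + m[1][2]
    w = m[2][0] * panel_x + m[2][1] * panel_y + m[2][2]
    return x / w, y / w  # divide by the homogeneous coordinate


top_left = panel_to_fb(0, 0)
bottom_right = panel_to_fb(480, 800)
print(top_left, bottom_right)
```

the corners land at (32, 16) and roughly (416, 464), i.e. a 384x448 window of the 640x480 framebuffer, which is exactly the playfield.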

auto-advancing through the title menu

kiosk mode means: cold boot, no keyboard required, game in stage 1 within a minute. that means automatically clicking through the title screen → game start → rank select (normal) → player select (reimu) → weapon select (type a) → stage 1.

xdotool does the keypress part. but the menu nav was finicky in two ways:

- th12's title menu cursor persists between launches. killall -9 wine doesn't reset it. the cursor position lives next to th12.exe in scoreth12.dat (the high score file, which also stores the cursor position). so if your previous session ended on "practice start," your next cold boot lands on practice start, and your auto-z press launches you into the practice stage select screen instead of the main game. fix: delete th12.cfg and scoreth12.dat in the launcher cleanup before every wine invocation. the cursor reliably starts on the "game start" option.

- multiple xdotool calls drop events. wine's keyboard grab on bare xorg (no window manager) is timing-sensitive. between two separate xdotool key invocations the focus state can shift just enough that the second call's keypress lands in the void. fix: send the entire menu nav sequence in a single xdotool call:

xdotool key --delay 250 z Down z z z z z z

confirm game start → switch easy to normal → confirm rank → confirm reimu → confirm type a → stage load
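if you'd rather drive this from python than from the shell, the same "one invocation, many keys" idea looks like this. a minimal sketch: the key list and delay are the ones from above, the function names are hypothetical, and it assumes xdotool is installed and an x display is up when run_menu_nav is actually called:

```python
import os
import subprocess

MENU_KEYS = ["z", "Down", "z", "z", "z", "z", "z", "z"]


def build_menu_nav_command(keys=MENU_KEYS, delay_ms=250):
    """Build one xdotool invocation that types every key in order."""
    return ["xdotool", "key", "--delay", str(delay_ms), *keys]


def run_menu_nav(display=":0"):
    """Send the whole sequence from a single process (needs xdotool + an X display)."""
    env = dict(os.environ, DISPLAY=display)
    return subprocess.run(build_menu_nav_command(), env=env, check=False).returncode


cmd = build_menu_nav_command()
approx_seconds = len(MENU_KEYS) * 250 / 1000
print(" ".join(cmd), f"(~{approx_seconds:.0f}s)")
```

eight keys at 250 ms each is where the ~2 second menu nav figure comes from.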

baseline measurement from a clean systemctl restart: about 23 seconds from service start to stage 1, measured by diffing systemd's Started timestamp against the first watcher_supervisor attempt=1 entry across 11 consecutive boots. most of that is wine boot + the zun ascii config dialog + the 6-second post-dialog settle wait that wine seems to need before keystrokes land reliably. the actual menu nav xdotool sequence is ~2 seconds, and stage load is another ~5 seconds.

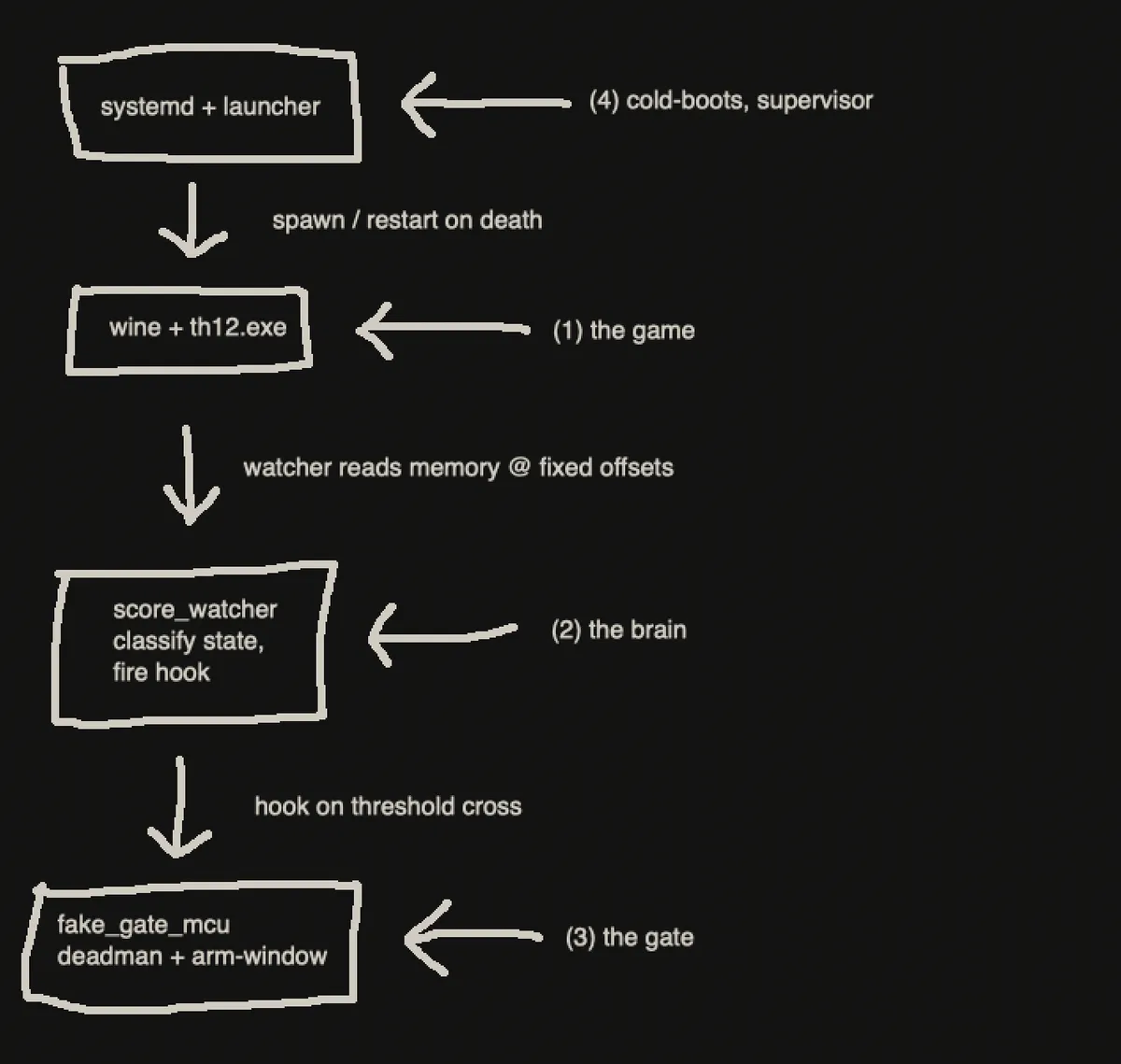

the architecture, at a glance

booting th12 is one thing; making a kiosk around it is another. before the next sections dive into specific daemons and recovery paths, here's the shape of the whole thing.

there are four moving parts on the running opi:

- the game: th12.exe under wine + box64.

- the watcher (score_watcher.py): polls th12's memory every ~100 ms via /proc/<pid>/mem, classifies game state (playing / game-over / stuck / title-screen), fires actions when something needs to happen.

- the gate (fake_gate_mcu.py): a separate python daemon talking to the watcher over a unix socket. owns the safety state machine for the hook (default-deny, heartbeat-deadman, single-arm-window) so that whatever the hook eventually drives can't be left armed by a watcher crash.

- the launcher + systemd plumbing: cold-boots the whole stack from power-on, supervises every other piece, recovers from every failure mode i could find.

data path. th12 has no aslr, so its score / stage / frame / lives / stage_struct_ptr all live at fixed offsets in the running process's memory. the watcher reads them through /proc/<pid>/mem like any other linux file.

control path. when the score crosses the configured threshold, the watcher fires a shell command (the hook), pauses the game with Esc, and shows an on-screen █████ flash for GATE_WINDOW seconds. the user signals a hit via a configured key (v by default → button_daemon → touches /tmp/score_hit); after the signal, or after the window expires, the watcher kicks off a stage reset.

reset ladder, three rungs, tried in order:

- stage_switch: inject a dll into th12.exe that calls th12's own stage-teardown + stage-init functions to swap to a different random stage in place (~5s).

- native restart: same dll trick, restarts the current stage (~5s).

- systemctl restart touhou-kiosk.service: cold-boots the whole kiosk (~23s).

recovery layers, three deep: the watcher resets the game when the game misbehaves. if the watcher dies, a bash supervisor loop respawns it within seconds. if the launcher itself dies, systemd's Restart=always brings the service back. nothing breaks the kiosk for more than ~30s.

the rest of this post walks through each piece in order: score watcher (how the brain reads state), cold boot (how the launcher comes up), dll injection (how stage_switch and native restart actually work), the bleedover (real open work), and the fuzz section (recovery validation).

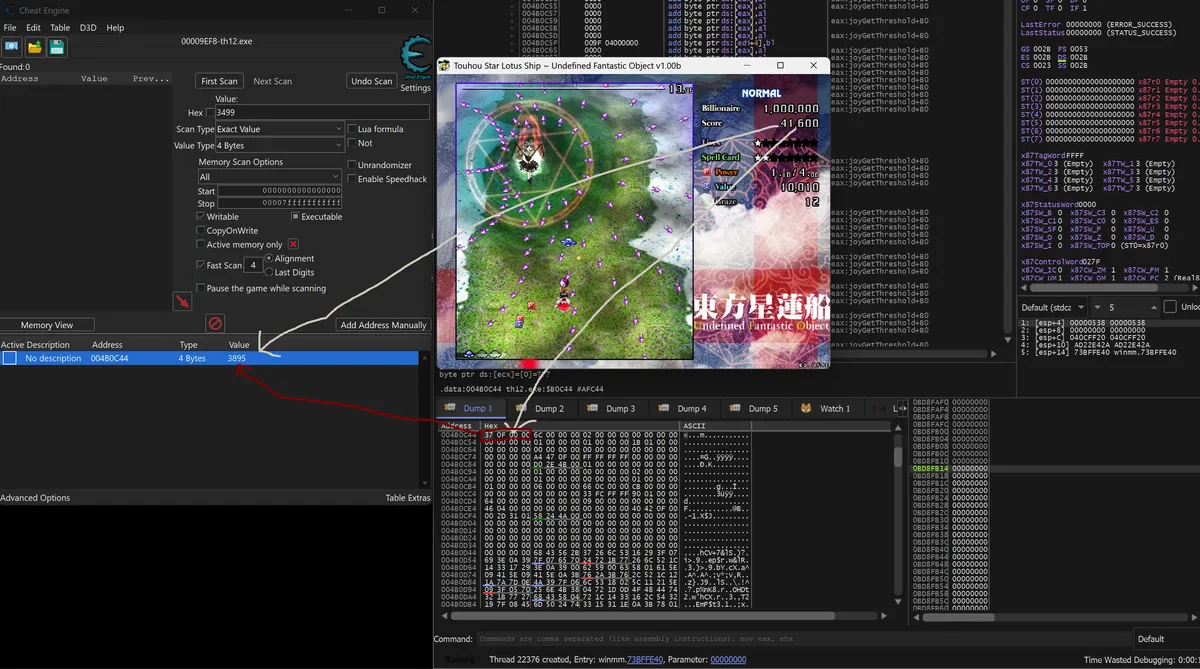

the score watcher

once the game runs reliably, the next question is: how does this thing know what's happening inside the game? current score, current stage, lives, the frame counter, whether the user is at game over or in a stage or paused. there are roughly two ways to do this: hook the game's d3d9 calls and ocr the rendered hud, or read the game's process memory directly.

linux has /proc/<pid>/mem, so we read directly.

reading process memory through the translation stack

th12.exe is a 32-bit x86 binary from 2009 running under wine 11.4 under box64's dynarec on aarch64 hardware. four layers between the original binary and the metal. but from the linux kernel's perspective, the wine process is just a normal linux process with a normal memory map, and /proc/<pid>/mem exposes that memory map without caring about what's running inside it. so we can read th12's virtual address space through linux's standard process-inspection mechanism, even though "th12" as an entity only exists as a translated representation inside the wine process.

on top of that, th12.exe has aslr disabled, so on a windows native install it lands at its preferred image base 0x00400000 every time. under wine64 + hangover + box64 on aarch64, the host process is 64-bit and the PE gets mapped into the low-32-bit region of that host — usually at 0x00400000, but the loader isn't strictly required to honor the preferred base. the reader code computes the actual mapped base from /proc/<pid>/maps and applies a delta (actual_base - 0x00400000) to every offset before reading.

the lack of aslr makes my life a lot easier: it means any offset i find on a windows native install of th12.exe is the same offset on the opi. i can run th12 on a windows host, attach cheat engine, scan for "score = whatever 4 byte value" until the address narrows to one candidate at 0x004b0c44, and that's the offset i use on the opi: add the delta from /proc/<pid>/maps, read through /proc/<pid>/mem. the windows-on-windows tools (ce, x64dbg, ida, ghidra) all work on the original executable; their findings transfer directly through the translation stack to the actual running process on the orange pi. this is a recurring theme in the rest of the post: x86 windows-native rev-eng tooling generates the answers, and we apply those answers to the live arm runtime via /proc/<pid>/mem and dll injection.

you can verify any of them yourself in cheat engine in about a minute. spawn th12 in a windows VM, start a stage, attach ce, "first scan" for "exact value 4 bytes" with the visible score as the search target. you'll get a handful of candidates; let the score tick (kill some things), "next scan" for "increased value," repeat until only one address remains — 0x004B0C44. the value at that address is the score divided by 10 (the game multiplies by 10 for the displayed value).

| field | address | notes |
|---|---|---|
| score | 0x004B0C44 | multiply by 10 for displayed value |
| stage | 0x004B0CB0 | 1-6, 0 = title menu / between stages |
| frame | 0x004B0CBC | per-stage frame counter, 60hz in stage |
| lives | 0x004B0CA0 | reimu's remaining lives |
| stage_struct_ptr | 0x004B44E8 | heap pointer to the live stage struct |

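reading them on linux takes only a few lines of python (this simplified version assumes the image landed at its preferred base, so no delta is applied, and it needs enough privilege to open another process's memory):

import struct

pid = ... # pgrep th12.exe

with open(f'/proc/{pid}/mem', 'rb', buffering=0) as f:

    f.seek(0x004B0C44)

    score = struct.unpack('<I', f.read(4))[0] * 10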
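the delta step described earlier can be sketched like this. it's a minimal version that assumes the th12.exe mapping shows up in /proc/<pid>/maps with its file path (if the loader maps it anonymously you'd need another way to find the base); the function names and the sample maps text are hypothetical, not lifted from the repo:

```python
import struct

PREFERRED_BASE = 0x00400000
SCORE_ADDR = 0x004B0C44  # from the table above


def find_image_base(maps_text, image_name="th12.exe"):
    """Lowest start address of any mapping backed by image_name, or None."""
    starts = []
    for line in maps_text.splitlines():
        parts = line.split(None, 5)  # addr perms offset dev inode [path]
        if len(parts) == 6 and parts[5].strip().endswith(image_name):
            starts.append(int(parts[0].split("-")[0], 16))
    return min(starts) if starts else None


def read_score(pid):
    """Read the displayed score from a live process (needs ptrace-level access, e.g. root)."""
    with open(f"/proc/{pid}/maps") as maps:
        base = find_image_base(maps.read())
    if base is None:
        raise RuntimeError("th12.exe mapping not found")
    delta = base - PREFERRED_BASE
    with open(f"/proc/{pid}/mem", "rb", buffering=0) as mem:
        mem.seek(SCORE_ADDR + delta)
        return struct.unpack("<I", mem.read(4))[0] * 10


# Made-up maps excerpt, only to exercise the parser.
SAMPLE_MAPS = """\
00400000-00401000 r--p 00000000 b3:02 1234 /home/ubuntu/th12/th12.exe
00401000-004a0000 r-xp 00001000 b3:02 1234 /home/ubuntu/th12/th12.exe
7f0000000000-7f0000021000 rw-p 00000000 00:00 0
"""
print(hex(find_image_base(SAMPLE_MAPS)))  # 0x400000
```

when the base comes back as 0x400000 the delta is zero and this collapses to the short version above.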

the daemons

with the addresses verified, my buddy claude wrote a small constellation of cooperating processes:

- a score reader that prints a snapshot on demand (debug only)

- a score overlay built in tk that pins itself topmost over the wine window and shows the live score in white outlined text

- a score watcher that polls every ~100ms, makes decisions, and fires actions

- a button daemon that listens on evdev for a single configured key to trip the gate

- a gate bridge that owns a small safety state machine and is the only thing allowed to arm/disarm whatever the hook eventually drives

the watcher is the brain. it polls memory, classifies the current game state, and decides what to do.

the reset reason taxonomy

the watcher's core insight: there are several distinct reasons a stage might need to be reset, and each reason has different side effects. lumping them all into "just call systemctl restart" works but produces a bad ux (every reset takes ~23 seconds) and is wrong about what the user actually did. instead, every reset path is classified by reason:

- threshold: the user reached the score gate. fire the hook. flash █████ on screen. pause the game (esc). lock the user's keyboard. wait up to GATE_WINDOW seconds for either a hit-file to appear or the window to expire. then restart the stage. this is the "user did █████" path. only this reason fires the hook.

- gameover: lives dropped to 0 and the stage frame counter has been frozen for >2 seconds. game-over screen is up. silent restart. no hook. no UI.

- this is still a little buggy, hopefully will be fixed by the time the full project is ready

- stuck: the stage frame counter is frozen for >10 seconds while lives > 0 and stage > 0. likely a pause menu the user can't or won't dismiss. try sending escape three times to unstick; if still frozen, silent restart.

- score_frozen: the in-stage frame counter is ticking (game is alive) but the score hasn't changed in N seconds. likely a passive reimu sitting at the start of a stage doing nothing. silent restart.

- title_screen: the live stage_struct_ptr at 0x004b44e8 reads as 0 for >5 seconds, which only happens on the title menu. user died, walked out through the menu, and is now sitting on the title screen indefinitely. silent restart so the kiosk drops them back into a stage.

the only reason that fires the hook is threshold. everything else is silent — firing the hook on a game-over event would be a security problem if the hook ever drives a real-world side effect.

a footnote on what actually ships: of those five, production today only runs threshold + title_screen. the other three (gameover, stuck, score_frozen) are switched off by the --threshold-only flag: the code stays in the codebase, but it never fires. they kept false-firing during th12's own stage-load animations. the engine pauses its frame counter for several seconds while initializing a new stage, and to the stuck heuristic that looks indistinguishable from a hung game. every false-positive triggered another stage_switch, and back-to-back machine-driven switches turn out to be a problem in their own right. title_screen is the one new path that's safe enough to leave armed because its signal (a single pointer at 0) is binary and unambiguous.
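to make the taxonomy concrete, here's a rough python sketch of a classifier over one polled snapshot. this is not the real score_watcher.py: the Snapshot fields, the check order, and the 60-second score_idle_limit (standing in for "N seconds") are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    score: int             # displayed score
    stage: int             # 0 = title menu / between stages
    lives: int
    stage_ptr: int         # value read from stage_struct_ptr
    frame_frozen_s: float  # seconds since the frame counter last changed
    score_frozen_s: float  # seconds since the score last changed
    ptr_zero_s: float      # seconds stage_ptr has read as 0


def classify(s, threshold, threshold_only=True, score_idle_limit=60.0):
    """Return a reset reason string, or None if the game should keep running."""
    if s.score >= threshold:
        return "threshold"      # the only reason that fires the hook
    if s.stage_ptr == 0 and s.ptr_zero_s > 5:
        return "title_screen"
    if threshold_only:
        return None             # heuristics below are gated off
    if s.lives == 0 and s.frame_frozen_s > 2:
        return "gameover"
    if s.lives > 0 and s.stage > 0 and s.frame_frozen_s > 10:
        return "stuck"
    if s.frame_frozen_s == 0 and s.score_frozen_s > score_idle_limit:
        return "score_frozen"
    return None
```

keeping the reason as an explicit return value is what lets the caller decide "hook or no hook" in one place instead of scattering that decision across five code paths.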

the gate and the deadman

the hook the watcher fires when the threshold is crossed is whatever you configure it to be — a shell command, full stop. in this kiosk the configured hook is a python script (gate_bridge.py) that talks to a separate "gate" daemon (fake_gate_mcu.py) via a unix socket. the gate is the part of the system that takes that hook call seriously even when nothing physical is wired up yet:

- default deny. the gate starts disarmed. it only arms after receiving an explicit arm <seconds> command. it disarms on disarm, on a heartbeat timeout, or when the window expires. there is no "stay armed forever" state.

- heartbeat deadman. while armed, the gate expects a heartbeat command from the controller every <1.5 seconds. if heartbeats stop arriving (controller crashed, socket disconnected, watcher died), the gate auto-disarms within 1.5s. the watcher cannot accidentally leave the gate armed by exiting; the gate notices the silence and shuts itself off.

- single arm window. the same GATE_WINDOW environment variable controls both how long the gate stays armed (its permissive window) and how long the on-screen ui shows the hit flash (the user's perceived window). they have to be the same number, or the gate stays permissive after the UI says you've timed out.

GATE_WINDOW=5

HOOK="/home/ubuntu/touhou-kiosk/gate_bridge.py --socket /tmp/touhou_gate.sock arm-window ${GATE_WINDOW} --background"

${GATE_WINDOW} is expanded when the launcher sources /etc/default/touhou-kiosk, so the number is baked into the hook string at that point. now the two windows are guaranteed identical.

the gate runs as its own systemd service (touhou-gate.service) with its own restart policy, independent of the kiosk service. if the kiosk crashes and restarts, the gate keeps its connection open. if the gate crashes, the kiosk's hook calls fail. they're isolated by design.
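the three gate rules fit in a very small state machine. this is a sketch of the idea rather than the real fake_gate_mcu.py (no socket handling, and the class and method names are invented); the clock is injectable so the logic can be tested without sleeping:

```python
import time


class Gate:
    """Default-deny gate with a heartbeat deadman and a bounded arm window."""

    def __init__(self, heartbeat_timeout=1.5, clock=time.monotonic):
        self.heartbeat_timeout = heartbeat_timeout
        self.clock = clock
        self.armed_until = None   # None means disarmed (the default)
        self.last_heartbeat = None

    def arm(self, seconds):
        now = self.clock()
        self.armed_until = now + seconds
        self.last_heartbeat = now

    def heartbeat(self):
        if self.is_armed():       # heartbeats can't revive an expired gate
            self.last_heartbeat = self.clock()

    def disarm(self):
        self.armed_until = None

    def is_armed(self):
        if self.armed_until is None:
            return False
        now = self.clock()
        expired = now >= self.armed_until
        silent = now - self.last_heartbeat > self.heartbeat_timeout
        if expired or silent:
            self.disarm()         # fail closed
            return False
        return True
```

every path out of the armed state ends in disarm(), and there is no method that extends the window, so "armed forever" isn't representable.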

cold boot to a stable state

the kiosk has to come up from cold metal, by itself, without any interaction from a user, every time the opi is power-cycled. and it has to stay up forever, recovering from every (probable) failure by itself.

the systemd unit

the whole kiosk runs as a systemd service. relevant bits of the unit file:

[Unit]

Description=Touhou TH12 kiosk (TH12 + score watcher + overlay)

After=multi-user.target

Conflicts=getty@tty1.service

ConditionPathExists=/home/ubuntu/touhou-kiosk/launch_th12.sh

StartLimitIntervalSec=300

StartLimitBurst=10

[Service]

Type=simple

User=ubuntu

Group=ubuntu

PAMName=login

TTYPath=/dev/tty1

TTYReset=yes

TTYVHangup=yes

StandardInput=tty

ExecStart=/home/ubuntu/touhou-kiosk/launch_th12.sh

Restart=always

RestartSec=3

a few notes:

- Conflicts=getty@tty1.service: by default ubuntu spawns a login getty on tty1. we want our x server there instead. listing the getty as a conflict tells systemd to stop it before our service starts.

- TTYPath=/dev/tty1 + TTYReset=yes + TTYVHangup=yes: make the kiosk service own tty1 the way a normal login session would. needed because xinit insists on a controlling tty.

- Restart=always, RestartSec=3: if the launcher exits for any reason, systemd respawns it after 3 seconds. originally i had Restart=on-failure, but discovered the launcher sometimes exits 0 (clean) when xinit dies, and on-failure doesn't restart on a clean exit. Restart=always fixes that.

- StartLimitIntervalSec=300 + StartLimitBurst=10: these are restart-rate-limiting settings. interesting story: i initially put them in the [Service] section, which is the wrong section. systemd silently ignores them there and uses its default (10-second window). systemd-analyze verify printed a warning that pointed me at the bug. they belong in [Unit], the unit-level scope. now the kiosk can restart up to 10 times in 5 minutes before systemd refuses, which catches genuine crash loops without false-tripping during a fuzz run.

the launcher's cleanup phase

systemd starts launch_th12.sh. the launcher runs as ubuntu (with passwordless sudo), and the first thing it does is clean up any state left from a prior session:

sudo killall -9 wine wine-preloader wine64-preloader Xorg xinit \

explorer.exe wineserver wineboot.exe winedevice.exe \

services.exe plugplay.exe th12.exe start.exe \

th12_stageswitch_inject.exe 2>/dev/null || true

sudo -u ubuntu env WINEPREFIX="${WINEPREFIX}" \

"${HANGOVER_WINE%/wine}/wineserver" -k 2>/dev/null || true

rm -f /tmp/.X*-lock

rm -rf /tmp/.X11-unix/X* /tmp/.wine-*

rm -f "${GAME_DIR}/th12.cfg" "${GAME_DIR}/scoreth12.dat"

the menu nav retry loop

after launching wine + xinit, the launcher waits for the th12 zun ascii dialog to appear, dismisses it with xdotool key Return, sleeps 6 seconds for wine's keyboard grab to settle, then runs the menu nav xdotool sequence described earlier. after that, the launcher checks th12's stage memory address to see if stage 1 actually loaded:

SUCCESS=0

for attempt in 1 2 3; do

do_menu_nav

# poll up to ~15s for stage=1 + lives>0 + frame in [110,250]

for w in $(seq 1 150); do

sleep 0.1

read s f l <<< "$(read_stage_frame_lives || echo "")"

if [ "$s" = "1" ] && [ "$l" -gt 0 ] && \

[ "$f" -ge 110 ] && [ "$f" -le 250 ]; then

SUCCESS=1; break 2

fi

done

done

if [ "$SUCCESS" != "1" ]; then

echo "[!] menu_nav failed 3 times — restarting service" >&2

sudo systemctl restart touhou-kiosk.service

fi

three attempts at menu nav. we wait for stage=1, lives>0 and the per-stage frame counter to be in a window past stage-init noise but before the player could realistically have died. if all three attempts fail (typically because wine's keyboard grab dropped a key and the menu got stuck somewhere unexpected), the launcher calls sudo systemctl restart on its own service. that gives systemd a fresh cold boot at our request — wine prefix wiped, xinit re-launched, full retry from scratch.
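the same bounded-retry shape, written out in python so the pieces are easier to see. a sketch only: read_state and do_menu_nav are hypothetical callables standing in for the launcher's memory reader and xdotool step, and the sleep function is injectable so it can be tested instantly:

```python
import time


def stage_ready(stage, frame, lives):
    """Same predicate as the shell loop: stage 1, alive, frame in [110, 250]."""
    return stage == 1 and lives > 0 and 110 <= frame <= 250


def wait_for_stage(read_state, polls=150, interval=0.1, sleep=time.sleep):
    """Poll read_state() until stage_ready; it returns (stage, frame, lives) or None."""
    for _ in range(polls):
        sleep(interval)
        state = read_state()
        if state is not None and stage_ready(*state):
            return True
    return False


def boot_to_stage(do_menu_nav, read_state, attempts=3, **wait_kwargs):
    """Retry menu nav up to `attempts` times; False means escalate to a service restart."""
    for _ in range(attempts):
        do_menu_nav()
        if wait_for_stage(read_state, **wait_kwargs):
            return True
    return False
```

treating a failed read as "not ready yet" (the None case) matters here, because the game process may not exist for the first few polls.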

worst-case time-to-stage-1 is therefore a couple minutes. it isn't provably bulletproof — startlimitburst=10 / startlimitintervalsec=300 will eventually give up if every restart hits the same wall — but in practice the bounded retry + service-restart escalation has been reliable across every boot i've measured & seen firsthand.

the supervisor loop

the launcher also runs the score watcher in a supervisor loop:

attempts=0

th12_gone_streak=0

th12_gone_first=0

while true; do

attempts=$((attempts + 1))

sudo "${KIOSK_DIR}/score_watcher.py" "$GAME" \

--threshold "$THRESHOLD" --hook "$HOOK" \

--gate-window "$GATE_WINDOW" --display :0 \

--state-file /tmp/touhou_state \

${TH12_NATIVE_RESTART:+--native-restart} \

${TOUHOU_THRESHOLD_ONLY:+--threshold-only} \

>>/tmp/watcher.log 2>&1

rc=$?

# rc=2 means watcher couldn't find th12.exe at startup.

# 3 consecutive rc=2 within 30s = th12 permanently dead.

now=$(date +%s)

if [ "$rc" = "2" ]; then

[ "$th12_gone_streak" -eq 0 ] && th12_gone_first=$now

th12_gone_streak=$((th12_gone_streak + 1))

age=$((now - th12_gone_first))

if [ "$th12_gone_streak" -ge 3 ] && [ "$age" -le 30 ]; then

# Backgrounded + exit so the unit's own ExecStart returns;

# a synchronous systemctl restart from inside the service

# would deadlock against systemd waiting for ExecStart to exit.

sudo systemctl restart touhou-kiosk.service &

exit 0

fi

else

th12_gone_streak=0

fi

# Tiered back-off: 2s for first 5 attempts, 10s through attempt 20, then 30s.

if [ "$attempts" -le 5 ]; then sleep 2

elif [ "$attempts" -le 20 ]; then sleep 10

else sleep 30

fi

done

the supervisor's job: if the watcher dies for any reason (sigsegv, unhandled exception, sigkill from a fuzz run, out-of-memory), respawn it on a tiered back-off, 2s for the first five attempts (fast retry on a transient crash), then 10s, then 30s (slow retry on a persistent failure so we don't fight a stuck game). if the watcher keeps exiting with rc=2 (th12.exe is not running) three times within 30 seconds, the supervisor backgrounds a systemctl restart and exits — the & + exit 0 is load-bearing, because a synchronous systemctl restart from inside the service unit would deadlock against systemd waiting for its own execstart to return.
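the tiered back-off on its own is only a few lines. here it is as a python function mirroring the shell thresholds above, plus a helper (hypothetical, not in the launcher) that totals how long the supervisor has slept by a given attempt:

```python
def backoff_seconds(attempt):
    """Sleep time after the given (1-based) watcher attempt."""
    if attempt <= 5:
        return 2
    if attempt <= 20:
        return 10
    return 30


def total_wait(attempts):
    """Total back-off slept across the first `attempts` respawns."""
    return sum(backoff_seconds(n) for n in range(1, attempts + 1))


print([backoff_seconds(n) for n in (1, 5, 6, 20, 21)])  # [2, 2, 10, 10, 30]
print(total_wait(20))                                   # 160
```

so a watcher that crashes instantly every time burns through the fast tiers in under three minutes of sleeping before settling at one retry every 30 seconds.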

this three-layer recovery (watcher → supervisor → systemd) is the whole game. every failure mode hits exactly one of those three layers, and one of them brings the kiosk back.

/etc/default/touhou-kiosk

all the user-tunable settings live in one file:

THRESHOLD=200000

GATE_WINDOW=5

HOOK="/home/ubuntu/touhou-kiosk/gate_bridge.py --socket /tmp/touhou_gate.sock arm-window ${GATE_WINDOW} --background"

TH12_STAGESWITCH=1

TH12_STAGESWITCH_STAGES=1,2,3,4,5,6

COLD_BOOT_RANDOM_STAGE=1

TOUHOU_THRESHOLD_ONLY=1

the launcher sources this file once at startup. you switch from debug to production by editing this file (raise THRESHOLD, leave TOUHOU_THRESHOLD_ONLY=1) and running systemctl restart touhou-kiosk. the single-source pattern (e.g. GATE_WINDOW referenced from HOOK) prevents the gate-window mismatch failure mode entirely. TOUHOU_THRESHOLD_ONLY is the flag that gates off the heuristic reset reasons discussed in the watcher section. production runs with it on because the heuristics false-fire on th12's normal stage-load pauses.
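the real launcher just lets bash source the file, but the expansion order is worth seeing explicitly. this python sketch models it under a big simplifying assumption (plain KEY=VALUE lines, only ${NAME} references, no other shell syntax); load_defaults is a made-up helper, not part of the kiosk:

```python
import re


def load_defaults(text):
    """Parse KEY=VALUE lines, expanding ${NAME} from keys defined earlier."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        if len(value) >= 2 and value[0] == value[-1] == '"':
            value = value[1:-1]  # drop surrounding quotes
        # Unknown names expand to "", like an unset shell variable.
        value = re.sub(r"\$\{(\w+)\}", lambda m: values.get(m.group(1), ""), value)
        values[key] = value
    return values


SAMPLE = '''\
GATE_WINDOW=5
HOOK="/home/ubuntu/touhou-kiosk/gate_bridge.py --socket /tmp/touhou_gate.sock arm-window ${GATE_WINDOW} --background"
'''
cfg = load_defaults(SAMPLE)
print(cfg["HOOK"])
```

the takeaway is that GATE_WINDOW has to be assigned above HOOK: swap the two lines and the hook gets an empty window argument.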

dll injection: a consistent path back to stage 1

cold boot to stage 1 takes ~23 seconds. that's fine for the initial boot, but it is not fine for "every time the user reaches the score threshold." an unplayable 23-second pause every cycle would kill ux (and rly annoy me).

so the watcher tries a tiered "reset ladder" for every reset, taking the fastest path that works:

- stage_switch via dll injection (~5 seconds): inject a dll that calls two functions inside th12.exe to tear down the current stage and start a different one. randomizes which stage is up next, every reset is a fresh stage. this is the production primary rung.

- native restart via dll injection (~5 seconds): same trick, but starts the same stage back up rather than switching to a new one. used when stage_switch is disabled or its hook didn't fire.

- sudo systemctl restart touhou-kiosk.service (~23 seconds): full restart, essentially a cold-boot w/o a hot cache.

if the fast one fails, the watcher falls through to the next. each step has a bounded timeout: the happy-path numbers above are best-case, and the dll-injection rungs have a 20-second failure timeout each on top. realistically, by the time we reach systemctl restart the user has waited up to ~45 seconds across the prior two rungs failing in sequence, which is rare, but recoverable.
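
the fall-through itself can be sketched in a few lines of python. this is not the watcher's actual code: `run_reset_ladder` and the rung callables are hypothetical, and each callable is assumed to enforce its own timeout and return true on success.

```python
from typing import Callable, Optional, Sequence, Tuple

# (rung name, attempt(timeout_s) -> succeeded?, timeout in seconds)
Rung = Tuple[str, Callable[[float], bool], float]


def run_reset_ladder(rungs: Sequence[Rung]) -> Optional[str]:
    """Try each rung in order; return the name of the first one that works."""
    for name, attempt, timeout_s in rungs:
        try:
            if attempt(timeout_s):
                return name
        except Exception:
            # a crashing rung counts as a failed rung; fall through
            pass
    return None


# Illustrative wiring only: these lambdas stand in for the real rungs.
ladder = [
    ("stage_switch", lambda t: False, 20.0),       # pretend the hook never fired
    ("native_restart", lambda t: True, 20.0),      # pretend this one worked
    ("systemctl_restart", lambda t: True, 60.0),   # 60s is a placeholder timeout
]
winner = run_reset_ladder(ladder)
```

keeping the rungs as data means the order and the timeouts live in one list, and a rung that throws is treated the same as a rung that times out.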

let me walk through rung 2 (native restart), because it's the simplest case to explain — and rung 1 is just rung 2 plus a stage-number tweak plus a couple of bleed-fix writes (described in the next section).

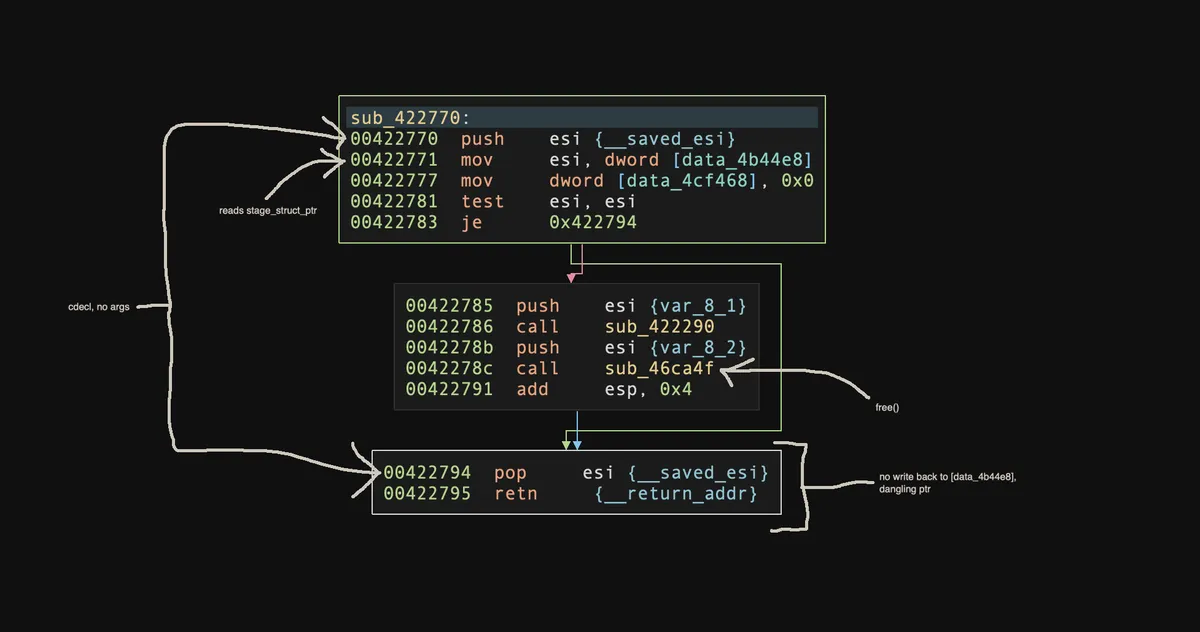

th12's task system

th12 has an internal task system, each long-running activity inside the engine is registered as a task with an entry-point function pointer. the engine's main loop iterates the task list and invokes each entry point. stages are tasks. the title menu is a task. the pause menu, the replay system, etc. etc.

looking at the binary statically (through whatever tool you like), the stage-init flow looks like:

| address | what |
|---|---|
| 0x422770 | stage teardown (cdecl, no args). tears down whatever stage is currently live. |
| 0x422700 | stage init wrapper (stdcall, 1 arg). allocates a new stage struct, sets up the task entry, registers the trampoline. |
| 0x422280 | the trampoline. jmp 0x421cd0. |
| 0x421cd0 | the actual stage-init worker. |

worth noting, th12's "tasks" are real os threads. each task is its own thread spawned at registration time, and the engine's main loop is doing thread-coordination rather than a callback iteration.

the only one of the four that's worth pulling up visually is the teardown at 0x422770, because it surfaces a detail that drives everything that comes next. the whole function is 38 bytes:

two things to point out. one, the calling-convention claim in the table is visible on inspection: callee-saved esi is push/pop'd around the body, nothing reads from [ebp+8]-style argument slots, and the epilogue is a plain pop esi; retn with no add esp, N cleanup. cdecl, no args. the function pulls its target from the global at [0x4b44e8], the same stage_struct_ptr offset documented in the score-watcher's address table earlier in the post. it then dispatches a per-struct destructor (sub_422290, stdcall, callee-cleans) and a thunk of free() (sub_46ca4f, cdecl with one arg, no return value used).

two, look at what's pointedly missing: there is no mov [data_4b44e8], 0 after the free. the function releases the stage struct's heap memory but leaves the global pointer dangling at the just-released address. if the engine ran another frame at that moment, it would dereference freed memory. the reset recipe has to fire as a single uninterrupted sequence on the main thread.

reset-from-in-stage is mechanically simple: call 0x422770 to tear down the current stage struct, then call 0x422700(0) to allocate a fresh one — and 0x422700 is the function that writes the new allocation back into [0x4b44e8], closing the dangling-pointer window. it assembles to exactly 21 bytes of x86:

b8 70 27 42 00 mov eax, 0x422770 ; teardown addr

ff d0 call eax ; tear down current stage

6a 00 push 0 ; arg to init wrapper

b8 00 27 42 00 mov eax, 0x422700 ; init wrapper addr

ff d0 call eax ; allocate new stage

33 c0 xor eax, eax ; return 0

c2 04 00 ret 4 ; stdcall thread entry: pop its 1 lpParameter arg

run those 21 bytes and the new stage allocates, the engine picks up the new callback, frames start ticking. from "trigger" to "stage 1 frame 1 ticking" w/ no menu transition, no zun ascii dialog, nothing.
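
if you want to sanity-check the byte count, the stub is small enough to build by hand. a minimal python sketch, assuming the two addresses from the table; `build_reset_stub` is a name i made up, and this only assembles the bytes, it doesn't inject anything.

```python
import struct

TEARDOWN_ADDR = 0x00422770      # stage teardown (cdecl, no args)
INIT_WRAPPER_ADDR = 0x00422700  # stage init wrapper (stdcall, 1 arg)


def build_reset_stub(teardown: int = TEARDOWN_ADDR,
                     init: int = INIT_WRAPPER_ADDR,
                     stage_arg: int = 0) -> bytes:
    """Assemble the teardown + init stub as raw 32-bit x86 machine code."""
    code = b""
    code += b"\xb8" + struct.pack("<I", teardown)   # mov eax, teardown
    code += b"\xff\xd0"                             # call eax
    code += b"\x6a" + struct.pack("<b", stage_arg)  # push imm8 (signed byte)
    code += b"\xb8" + struct.pack("<I", init)       # mov eax, init
    code += b"\xff\xd0"                             # call eax
    code += b"\x33\xc0"                             # xor eax, eax
    code += b"\xc2" + struct.pack("<H", 4)          # ret 4
    return code


stub = build_reset_stub()
print(len(stub), stub.hex(" "))
```

the addresses are packed little-endian, which is why 0x00422770 shows up as 70 27 42 00 in the listing.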

the issue: these 21 bytes have to fire on the main game thread, between frames. partly because the d3d9 device state they indirectly touch is single-threaded and gets wedged by cross-thread calls, but mostly because of the dangling-pointer window described above. anything that runs between the teardown and the init on the main thread reads freed memory and crashes the engine.

the obvious approach is to inject the shellcode directly: virtualallocex a scratch page in th12, writeprocessmemory the 21 bytes in, createremotethread with the page address as the thread start. that was the first thing tried, and it didn't work for two compounding reasons. first, the spawned thread isn't the main game thread; it's a fresh worker the os just allocated, and the d3d9 teardown call from the wrong thread wedges the engine immediately. second, the standard rescue (ptrace + setthreadcontext to detour the existing main thread to the scratch page) is doubly unavailable: wine restricts cross-process setthreadcontext deliberately, and even if you bypassed that, under the box64 dynarec the "thread context" ptrace_getregs returns is arm64 x0..x30, pc, sp, not x86. there is no x86 eip to set. the live program counter is somewhere inside box64's jit dispatch, not at any x86 instruction we could redirect.

so shellcode is the wrong shape. we need the calls to fire on the main game thread without inventing a new thread or hijacking the existing one, which means hooking into a callback the main thread itself will run on its own, on its own schedule.

wh_getmessage on the main thread

the way you do this on windows native is well-trodden: inject a dll, install a setwindowshookexa(wh_getmessage, ..., main_tid) thread-targeted hook, and the moment the main thread picks up the next message, your hook fires on the main thread, between frames. perfect place to do the two calls.

doing this on wine takes one extra trick. wine permits createremotethread + loadlibrarya for cross-process injection. that's how everything from ce's wine fork to wine's own native dll loader works. wine does not permit (most) thread-related winapi calls from cross-process, those are the apis that ptrace-like debuggers want, and wine restricts them deliberately. so the trick is: do the injection cross-process (allowed), then inside the injected dll, do everything else (in-process, all allowed). once the dll is loaded into th12's address space, you're "th12" as far as the operating system is concerned, and wine's cross-process restrictions don't apply.

a sketch of what the dll does inside dllmain:

// All running inside the wine process now, no wine restrictions apply.

HWND hwnd = FindWindowA("BASE", NULL); // th12's window class

DWORD main_tid = GetWindowThreadProcessId(hwnd, NULL);

g_hook = SetWindowsHookExA(WH_GETMESSAGE, MyHookProc, hModule, main_tid);

PostMessageA(hwnd, WM_NULL, 0, 0); // poke the queue

// Wait a few ms for the hook to fire on the main thread.

// (the hook proc does the actual call to 0x00422770 / 0x00422700)

UnhookWindowsHookEx(g_hook);

FreeLibraryAndExitThread(hModule, 0);

the hook proc itself is fifteen lines:

static LRESULT CALLBACK MyHookProc(int code, WPARAM w, LPARAM l) {

if (code >= 0 && InterlockedCompareExchange(&g_done, 1, 0) == 0) {

DWORD stage = *(volatile DWORD*)0x004B0CB0;

DWORD sptr = *(volatile DWORD*)0x004B44E8;

if (stage >= 1 && stage <= 7 && sptr != 0) {

((void(__cdecl *)(void))0x00422770)(); // tear down

((void*(__stdcall*)(unsigned))0x00422700)(0); // init new

}

}

return CallNextHookEx(g_hook, code, w, l);

}

measured fire time on windows native: ~10 ms from createremotethread returning to the hook running its two calls. on wine on the orange pi, also ~10 ms — the v4 dll logs the wait inline as worker waited_ms and reads 0x0a per fire on the kiosk, matching the windows-native figure. the in-process frame counter at [0x4b0cbc] keeps advancing at 53–60 fps across the reset window, and there's a single-frame hitch when the teardown + init runs, then frames resume. (53–60 is the frame-counter-throughput-via-/proc/mem measurement; i don't have an independent rendered-fps probe, but the on-screen game is visibly smooth and the counter rate matches steady-state gameplay, so the rendering pipeline isn't stalling.)
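
the counter-throughput number is just two dword reads and a division. here's a rough python sketch of that measurement; `read_u32` and `counter_fps` are hypothetical helper names, and it assumes the game's address can be used directly as an offset into /proc/<pid>/mem, which is what the reads described in this post imply.

```python
import io
import struct

FRAME_COUNTER_ADDR = 0x004B0CBC  # in-process frame counter, from the post


def read_u32(mem, addr: int) -> int:
    """Read one little-endian dword at addr from a seekable binary file object."""
    mem.seek(addr)
    data = mem.read(4)
    if len(data) != 4:
        raise OSError(f"short read at {addr:#x}")
    return struct.unpack("<I", data)[0]


def counter_fps(count_a: int, count_b: int, elapsed_s: float) -> float:
    """Frames per second implied by two counter samples taken elapsed_s apart."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    delta = (count_b - count_a) & 0xFFFFFFFF  # tolerate 32-bit wraparound
    return delta / elapsed_s


# Stand-in for /proc/<pid>/mem so the sketch runs anywhere.
fake_mem = io.BytesIO(b"\x00" * 16 + struct.pack("<I", 600))
sample = read_u32(fake_mem, 16)
```

on the kiosk you'd open `/proc/<pid>/mem` in binary mode, call `read_u32(mem, FRAME_COUNTER_ADDR)` twice with a sleep in between, and feed both samples to `counter_fps`.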

this whole approach is the windows-native re → arm runtime bridge in action. the function addresses (0x00422770, 0x00422700) came from disassembling th12.exe on the mac. the hook mechanism (wh_getmessage) is plain win32. the dll itself was cross-compiled with i686-w64-mingw32-gcc on the opi. and the whole thing runs through box64's dynarec on a cortex-a76. windows tooling produces the capabilities, while wine runs them on arm.

the third-rung fallback: systemctl restart

what if the dll injection fails — wine itself wedged, dll fails to load, the hook never fires? the watcher times out after 20 seconds and falls through to sudo systemctl restart touhou-kiosk.service. that's the bombproof path: the unit file's cleanup phase wipes the wine prefix, killalls any stragglers, and cold-boots the whole stack. ~23 seconds visible to the user, but it always works.

three rungs. each with a bounded timeout. the watcher always recovers eventually.

bloom of doom

the dll-injection rung works mechanically — the wh_getmessage hook fires on the main thread within ~10ms (the wine-on-opi figure from the previous section), the teardown call returns, the init call returns, frame counter ticks forward, score reads 0, stage reads the new value. by every memory-based criterion the reset succeeded.

the problem here, visually, is that stage-switching leaves a uniform green/cyan tint over the entire playfield of the new stage, sometimes. the tint matches the previous stage's color palette: switch from stage 3 (bright sky) into stage 6 (dark ufo interior) and stage 6 looks like it's underwater. switch from stage 1 into stage 4 and stage 4 inherits a haze.

the first hypothesis was the gpu pipeline: wine's wined3d backend talks opengl, mali's panfrost driver does tile-based deferred rendering, the dll injection runs the teardown+init pair between frames, and i thought we were outpacing some tile cache that hadn't fully evicted previous-frame content. i spent a lot of time w/ pan_mesa_debug tunables, dxvk experiments, with all of it being the wrong layer to focus on.

what (embarrassingly) took me way too long to notice is that the bloom isn't a bug. when you die in a stage and sit there at the continue prompt without doing anything, the same green/cyan tint from the enemy projectiles hangs in the background. and walking out of game-over via the natural pause → retry path clears it completely. the gpu pipeline has nothing to do with what happens when a player navigates a menu; the engine itself was clearing state on the menu path that the dll injection isn't.

the actual issue is that stage_switch fires from inside the paused state. the watcher's flow is: cross threshold → press esc to pause the game → flash █████ → fire the hook → call the dll. by the time the dll's teardown+init runs, th12 is already in pause-render mode. the dll hops straight to stage_teardown → stage_init and never tells the engine to leave pause-render. the new stage allocates and starts ticking while the engine is still rendering as if paused, so the previous state's bloom will never clear.

i went chasing the data-side residue before i figured this out. swapped to my windows host and had claude write a harness that drove the actual esc+up+z+up+z keystrokes on a windows-native th12 via an idirectinputdevice8 vtable hook, snapshotted th12's .data section before and after the retry, and produced a 289-dword diff. three deltas stood out:

[0x004CF2A8] = 0xFF000000 (dirty) → 0x00000000 (clean) bloom clear color

[0x004CF3FC] = 0x00000001 (dirty) → 0x00000000 (clean) render flag

[0x004CE000..0x004CE56C] populated (dirty) → all zero (clean) pause-capture JPEG buffer
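
the snapshot comparison boils down to a dword-by-dword diff. a minimal python sketch of that step, not the actual harness: `dword_diff` is a hypothetical name, and the two snapshots are assumed to be same-length byte dumps of .data starting at a known base address.

```python
import struct


def dword_diff(before: bytes, after: bytes, base: int):
    """Return (address, old, new) for every 32-bit little-endian word that changed."""
    if len(before) != len(after):
        raise ValueError("snapshots must be the same length")
    count = len(before) // 4  # ignore any trailing partial dword
    old_words = struct.unpack(f"<{count}I", before[: count * 4])
    new_words = struct.unpack(f"<{count}I", after[: count * 4])
    return [
        (base + 4 * i, old, new)
        for i, (old, new) in enumerate(zip(old_words, new_words))
        if old != new
    ]


# Tiny made-up snapshots, just to show the output shape.
dirty = struct.pack("<3I", 0xFF000000, 0x00000001, 0x00000007)
clean = struct.pack("<3I", 0x00000000, 0x00000000, 0x00000007)
for addr, old, new in dword_diff(dirty, clean, 0x1000):
    print(f"[{addr:#010x}] = {old:#010x} -> {new:#010x}")
```

a diff like this only sees th12's own memory, so it can surface globals like the three above but nothing that lives on the wined3d side.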

v4 of the dll wrote those globals to their "clean" values from inside the hook. log on the kiosk confirmed every write landed:

HOOK pre bcc,flg,jpeg0,jpeg4 ff000000 00000001 ffd8ff00 00003cff ← dirty

RT ColorFill rt 4cea94..98..9c hr=00000000 ×3

HOOK post-fix bcc,flg,jpeg0,jpeg4 00000000 00000000 00000000 00000000 ← clean

didn't change a thing visually 👎, which lines up with the right diagnosis. the bloom isn't held on by data-side globals. it's held on by the d3d device state that wined3d carries on the linux side. natural retry issues idirect3ddevice9 method calls (clear, setrenderstate, etc.) as part of its pause-exit walk, and those reset wined3d's view of the world. the dll skips all of that. snapshotting /proc/<pid>/mem captures th12's view; it doesn't capture wined3d's.

the real issue here from my pov isn't "clear the bloom," it's "drive whatever pause-exit sequence the natural retry runs, before firing teardown+init."

and to be fair, i have no idea how to do it. i tried guys, i gave up sorry.

for now i'm accepting it: rapid stage_switches from the pause modal carry the pause bloom into the next stage, it's ugly. so instead, i'll just keep the bodged esc+up+z+up+z ahk-type sequence.

p.s. the art of the bodge (linked video) is a top-three all timer on youtube, please watch fr

the end (for now)

the next phase for this project is █████, we can talk about that come 2027 :)

but, the software side is done: cold boot to playable th12 in about 23 seconds, the relevant kiosk telemetry exposed (live score/stage/frame/lives via /proc/mem, watcher + supervisor logs, gate audit log, /tmp sentinel files for state), configurable hook fires on score events, software gate-bridge stub with default-deny + heartbeat deadman safety ready for whatever the hook eventually drives, self-healing through every failure mode i could find, dll injection-driven fast restart at ~5 seconds (with the visual-bleed caveat above), full systemctl-restart fallback when anything goes sideways.

i will see u soon my friends, also im sorry that the text in the images is super small, i'll make the text bigger next time :(

if there's anything you'd like to talk about, post-related or not, hit me up!

-connor / ret2c